Reusing Existing Data

Reusing previously extracted data can speed up some agents dramatically. For example, an agent that downloads large files may be able to determine that some files have not changed, and then copy those files from the existing data rather than downloading them again. To do this, add an Execute Script command and set the script type to Copy Duplicate Data. The duplicate script tries to match the extracted data with data that already exists in the internal database. If a match is found, the existing data is copied to the current data set and the agent exits the container command.

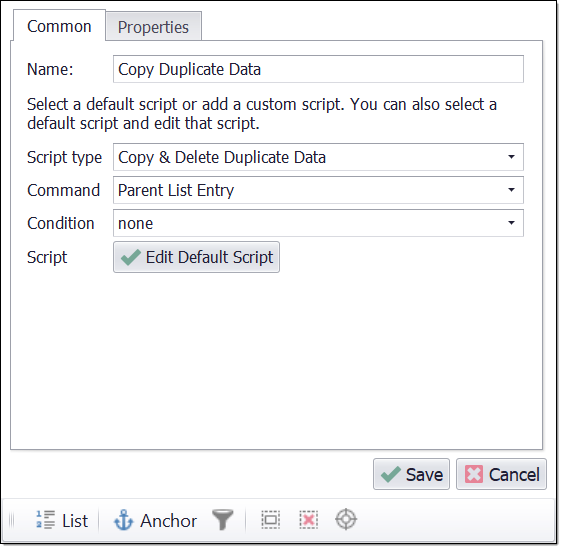

Execute Script command with script type set to Copy Duplicate Data.
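The matching step can be pictured with a small sketch. This is plain Python written only for illustration, not the agent's script API: the function name `copy_if_duplicate`, the field names, and the list-of-dicts stand-in for the internal database are all assumptions.

```python
def copy_if_duplicate(extracted, existing_rows):
    """Return a copy of the stored row whose fields match everything
    extracted so far, or None if no stored row matches.

    Hypothetical helper: the real duplicate script works against the
    internal database, which is modeled here as a list of dicts.
    """
    if not extracted:
        return None  # nothing extracted yet, so nothing to match on
    for row in existing_rows:
        if all(row.get(key) == value for key, value in extracted.items()):
            return dict(row)  # copy the old row into the current data set
    return None


# Data kept from the previous agent run (illustrative values).
previous_run = [
    {"title": "Report A", "update_date": "2024-01-05", "file": "report-a.pdf"},
    {"title": "Report B", "update_date": "2024-02-11", "file": "report-b.pdf"},
]

# Same title and update date as before: the stored row is reused.
unchanged = copy_if_duplicate(
    {"title": "Report A", "update_date": "2024-01-05"}, previous_run
)

# The update date differs: no match, so extraction would continue as normal.
changed = copy_if_duplicate(
    {"title": "Report B", "update_date": "2024-03-01"}, previous_run
)
```

In this sketch a match returns the whole stored row, including the previously downloaded file, which is the part that saves time.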

The position of the duplicate script is important. The script only tries to match data that has already been extracted in the current container command, so data extracted by capture commands positioned after the script command is not used in the match. For example, suppose a container command has three capture commands that extract Title, Update Date and Download Large File, and the match check should be done on Title and Update Date only. The duplicate script should then be positioned after the capture commands that extract Title and Update Date, but before the capture command that extracts the large file. Since the duplicate script exits the container command if the Title and Update Date already exist, the Download Large File command is not processed in that case.

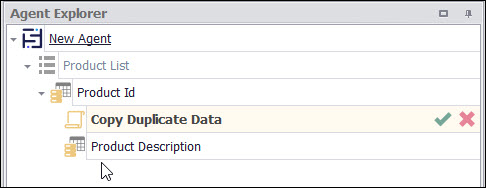

Copy file if title and update date have not changed.
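The effect of the script's position can be sketched as a loop over ordered commands. This is an illustrative model only, assuming a hypothetical `run_container` function and a `DUPLICATE_CHECK` marker; none of these names come from the product.

```python
DUPLICATE_CHECK = "duplicate_check"  # hypothetical marker for the script command


def run_container(commands, page, existing_rows):
    """Run commands in order. At the duplicate check, compare only the
    fields captured so far; on a match, return the old row and skip
    every command that comes after the check."""
    extracted = {}
    for name, capture in commands:
        if name == DUPLICATE_CHECK:
            for row in existing_rows:
                if extracted and all(row.get(k) == v for k, v in extracted.items()):
                    return dict(row), True  # exit the container early
            continue
        extracted[name] = capture(page)
    return extracted, False


downloads = []  # records every simulated large-file download


def download_large_file(page):
    downloads.append(page["url"])
    return page["url"].rsplit("/", 1)[-1]


# Title and Update Date come before the check; the download comes after it.
commands = [
    ("title", lambda p: p["title"]),
    ("update_date", lambda p: p["update_date"]),
    (DUPLICATE_CHECK, None),
    ("file", download_large_file),
]

existing = [{"title": "Report A", "update_date": "2024-01-05", "file": "report-a.pdf"}]
same = {"title": "Report A", "update_date": "2024-01-05",
        "url": "https://example.com/report-a.pdf"}
newer = {"title": "Report A", "update_date": "2024-03-01",
         "url": "https://example.com/report-a-v2.pdf"}

row_same, copied_same = run_container(commands, same, existing)
downloads_after_same = len(downloads)  # the download step was skipped

row_newer, copied_newer = run_container(commands, newer, existing)
downloads_after_newer = len(downloads)  # the changed item was downloaded
```

If the check were moved to the end of the list in this model, the file would be downloaded before any comparison happened, and the freshly downloaded file name would also become part of the match.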

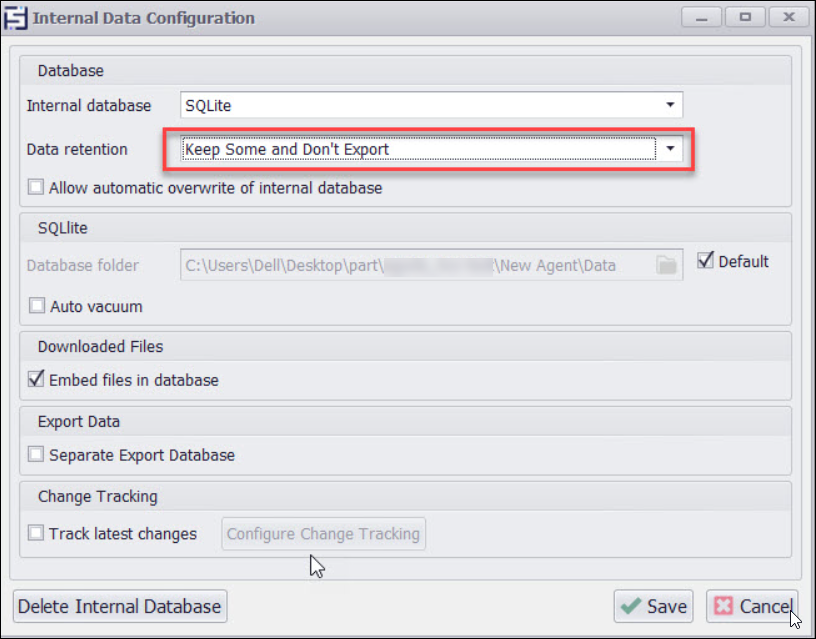

Since the default duplicate script copies existing data if it has not changed, it's important that the internal database is configured to keep previously extracted data. It's not necessary to keep more than one old data set, so the Old data option can be set to Keep Some and Don't Export. This setting keeps only the data from the last successful agent run, and exports only the currently extracted data plus the old data that the duplicate script copied into the current data set.

Internal database configured to keep some old data.