Extracting New Data Only

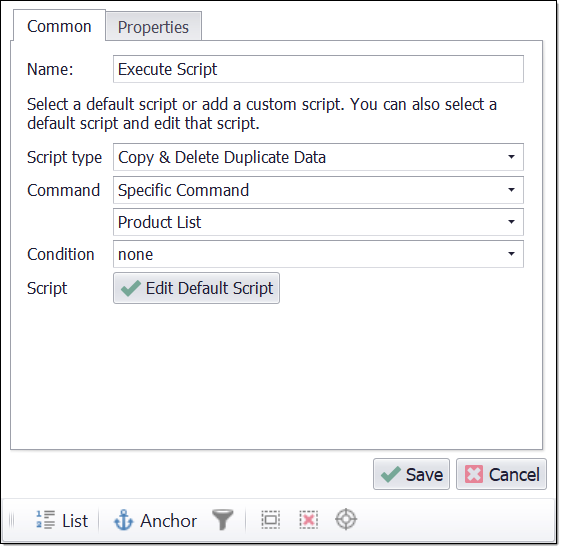

When extracting data from some websites, such as large forums, it's sometimes desirable to extract all data once and, on later runs, extract only the data added to the website since the last time the agent ran. This can be done by adding an Execute Script command and setting the script type to Exit on Duplicate Data. The duplicate script tries to match extracted data against data that already exists in the internal database. If the extracted data already exists in the internal database, the agent knows there's no more new data available and exits.

It's important that data on the target website is sorted in a predictable way. For example, when extracting data from a forum, the forum posts are normally sorted by date, so when the agent reaches a post that was already extracted the last time it ran, it knows that all older posts have also been extracted, and the agent can exit.
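
The exit-on-duplicate idea can be sketched outside the product. The following is a minimal Python illustration of the logic only, not the script API the product generates. The function name `extract_new_posts`, the `seen` set standing in for the internal database, and the sample posts are all hypothetical, and the posts are assumed to be listed newest first.

```python
def extract_new_posts(posts_newest_first, seen):
    """Return posts not yet in `seen`, stopping at the first duplicate.

    `seen` is a hypothetical stand-in for the internal database.
    """
    new_posts = []
    for post in posts_newest_first:
        key = (post["date"], post["title"])
        if key in seen:
            # Posts are sorted newest first, so everything after this
            # point is older and was extracted on an earlier run.
            break
        new_posts.append(post)
        seen.add(key)
    return new_posts


# Hypothetical sample data: three posts on the first run.
site = [
    {"date": "2024-01-03", "title": "C"},
    {"date": "2024-01-02", "title": "B"},
    {"date": "2024-01-01", "title": "A"},
]
seen = set()
first_run = extract_new_posts(site, seen)

# One new post appears at the top before the second run.
site.insert(0, {"date": "2024-01-04", "title": "D"})
second_run = extract_new_posts(site, seen)
```

On the second run only the newly added post is returned, because the loop stops as soon as it reaches a post it has already stored. If the list were not sorted, stopping at the first match could skip new posts that appear further down.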

If the target website is not ordered in a predictable way, it is not possible to extract only new data in this way, since the agent would have to go through all data on the website to make sure it's not missing any new data.

Default duplicate script configured to exit agent when match is found.

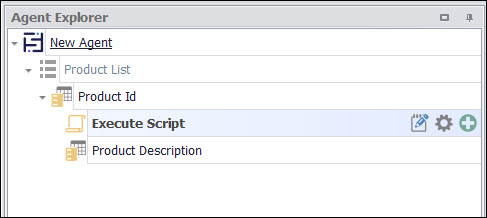

The position of the duplicate script is important. The script will only try to match data that has already been extracted in the current container command, so data extracted by capture commands that are positioned after the script command will not be used. For example, if a container command has three capture commands that extract Post Date, Post Title and Post Content, and the match check should be done on Post Date and Post Title only, then the duplicate script should be positioned after the capture commands that extract Post Date and Post Title, but before the capture command that extracts Post Content. If the match check should be done on all the data extracted in the container command, then the duplicate script should be positioned last in the container.

Check for match on Post Date and Post Title

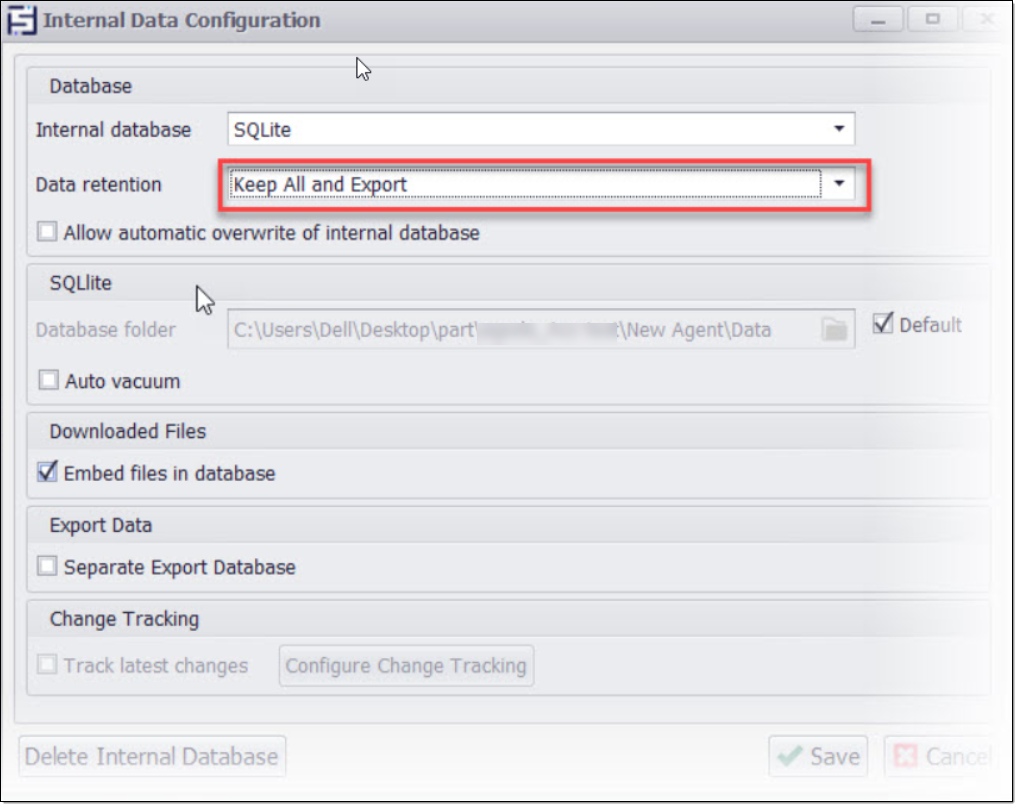
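
The effect of the script's position can be illustrated with a small Python sketch. It is an assumption-laden model, not the product's implementation: `run_container`, the `"DUPLICATE_CHECK"` marker, and the sample rows are hypothetical names used only to show that fields captured after the check do not take part in the match.

```python
def run_container(steps, row_source, stored_rows):
    """Run capture steps in order and return (captured_fields, is_duplicate).

    `steps` is an ordered list of field names plus one "DUPLICATE_CHECK"
    marker; `stored_rows` stands in for the internal database.
    """
    captured = {}
    is_duplicate = False
    for step in steps:
        if step == "DUPLICATE_CHECK":
            # Only fields captured so far are compared with stored rows.
            is_duplicate = any(
                all(old.get(field) == value for field, value in captured.items())
                for old in stored_rows
            )
            if is_duplicate:
                break  # the agent would exit here
        else:
            captured[step] = row_source[step]
    return captured, is_duplicate


# Hypothetical data: the post content was edited after the previous run.
stored = [{"Post Date": "2024-01-01", "Post Title": "Hello", "Post Content": "old text"}]
page_row = {"Post Date": "2024-01-01", "Post Title": "Hello", "Post Content": "edited text"}

# Check placed after Post Date and Post Title, before Post Content.
_, dup_partial = run_container(
    ["Post Date", "Post Title", "DUPLICATE_CHECK", "Post Content"], page_row, stored
)

# Check placed last, so all three fields take part in the match.
_, dup_full = run_container(
    ["Post Date", "Post Title", "Post Content", "DUPLICATE_CHECK"], page_row, stored
)
```

With the check placed before Post Content, the edited post still counts as a duplicate. With the check placed last, the changed content prevents a match, so the post would be treated as new.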

Since the agent will extract only new data, it's important the internal database is configured to keep all previously extracted data.

Internal database configured to keep all previously extracted data
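
Why the stored data must be kept can be shown with a short sketch using Python's built-in `sqlite3` module. This is only an illustration under stated assumptions: the `posts` table, the `run_agent` function, and the `keep_previous_data` flag are hypothetical and do not reflect the product's actual database schema or settings.

```python
import sqlite3


def run_agent(conn, posts_newest_first, keep_previous_data):
    """Store unseen posts and return how many were extracted on this run."""
    conn.execute("CREATE TABLE IF NOT EXISTS posts (post_date TEXT, title TEXT)")
    if not keep_previous_data:
        # Simulates a database that is emptied before every run.
        conn.execute("DELETE FROM posts")
    extracted = 0
    for post_date, title in posts_newest_first:
        match = conn.execute(
            "SELECT 1 FROM posts WHERE post_date = ? AND title = ?",
            (post_date, title),
        ).fetchone()
        if match:
            break  # duplicate found, no more new data
        conn.execute("INSERT INTO posts VALUES (?, ?)", (post_date, title))
        extracted += 1
    conn.commit()
    return extracted


# Hypothetical sample data, newest first.
old_posts = [("2024-01-02", "B"), ("2024-01-01", "A")]
new_posts = [("2024-01-03", "C")] + old_posts

keep = sqlite3.connect(":memory:")
run_agent(keep, old_posts, keep_previous_data=True)
kept_second = run_agent(keep, new_posts, keep_previous_data=True)

wipe = sqlite3.connect(":memory:")
run_agent(wipe, old_posts, keep_previous_data=False)
wiped_second = run_agent(wipe, new_posts, keep_previous_data=False)
```

When earlier data is kept, the second run extracts only the one new post. When the table is emptied first, there is nothing to match against, so the second run extracts every post again.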
